Addressing vulnerability management

-

Anyone working in the information and infrastructure security space will be more than familiar with the non-stop evolution that is vulnerability management. Seemingly on a daily basis, we see new attacks emerging, and those old mechanisms that you thought were well and truly dead resurface with “Frankenstein” like capabilities rendering your existing defences designed to combat that particular threat either inefficient, or in some cases, completely ineffective. All too often, we see previous campaigns resurface with newer destructive capabilities designed to extort both from the financial and blackmail perspective.It’s the function of the “Blue Team” to (in several cases) work around the clock to patch a security vulnerability identified in a system, and ensure that the technology landscape and estate is as healthy as is feasibly possible. On the flip side, it’s the function of the “Red Team” to identify hidden vulnerabilities in your systems and associated networks, and provide assistance around the remediation of the identified threat in a controlled manner.

Depending on your requirements, the minimum industry accepted testing frequency from the “Red Team” perspective is once per year, and typically involves the traditional “perimeter” (externally facing devices such as firewalls, routers, etc), websites, public facing applications, and anything else exposed to the internet. Whilst this satisfies the “tick in the box” requirement on infrastructure that traditionally never changes, is it really sufficient in today’s ever-changing environments ? The answer here is no.

With the arrival of flexible computing, virtual data centres, SaaS, IaaS, IoT, and literally every other acronym relating to technology comes a new level of risk. Evolution of system and application capabilities has meant that these very systems are in most cases self-learning (and for networks, self-healing). Application algorithms, Machine Learning, and Artificial Intelligence can all introduce an unintended vulnerability throughout the development lifecycle, therefore, failing to test, address, and validate the security of any new application or modern infrastructure that is public facing is a breach waiting to happen. For those “in the industry”, how many times have you been met with this very scenario

“Blue Team: We fixed the vulnerabilities that the Red Team said they’d found…” “Red Team: We found the vulnerabilities that the Blue Team said they’d fixed…”

Does this sound familiar ?

What I’m alluding to here is that security isn’t “fire and forget”. It’s a multi-faceted, complex process of evolution that, very much like the earth itself, is constantly spinning. Vulnerabilities evolve at an alarming rate, and unfortunately, your security program needs to evolve with it rather than simply “stopping” for even a short period of time. It’s surprising (and in all honesty, worrying) the amount of businesses that do not currently (and even worse, have no plans to) perform an internal vulnerability assessment. You’ll notice here I do not refer to this as a penetration test - you can’t “penetrate” something you are already sitting inside. The purpose of this exercise is to engage a third party vendor (subject to the usual Non-Disclosure Agreement process) for a couple of days. Let them sit directly inside your network, and see what they can discover. Topology maps and subnets help, but in reality, this is a discovery “mission” and it’s up to the tester in terms of how they handle the exercise.

The important component here is scope. Additionally, there are always boundaries. For example, I typically prefer a proof of concept rather than a tester blundering in and using a “capture the flag” approach that could cause significant disruption or damage to existing processes - particularly in-house development. It’s vital that you “set the tone” of what is acceptable, and what you expect to gain from the exercise at the beginning of the engagement. Essentially, the mantra here is that the evolution wheel in fact never stops - it’s why security personnel are always busy, and CISO’s never sleep

These days, a pragmatic approach is essential in order to manage a security framework properly. Gone are the days of annual testing alone, being dismissive around “low level” threats without fully understanding their capabilities, and brushing identified vulnerabilities “under the carpet”. The annual testing still holds significant value, but only if undertaken by an independent body, such as those accredited by CREST (for example).

You can reduce the exposure to risk in your own environment by creating your own security framework, and adopting a frequent vulnerability scanning schedule with self remediation. Not only does this lower the risk to your overall environment, but also provides the comfort that you take security seriously to clients and vendors alike whom conduct frequent assessments as part of their Due Diligence programs. Identifying vulnerabilities is one thing, however, remediating them is another. You essentially need to “find a balance” in terms of deciding which comes first. The obvious route is to target critical, high, and medium risk, whilst leaving the “low risk” items behind, or on the “back burner”.

The issue with this approach is that it’s perfectly possible to chain multiple vulnerabilities together that on their own would be classed as low risk, and end up with something much more sinister when combined. This is why it’s important to address even low-risk vulnerabilities to see how easy it would be to effectively execute these inside your environment. In reality, no Red Team member can tell you exactly how any threat could pan out if a way to exploit it silently existed in your environment without a proof of concept exercise - that, and the necessity sometimes for a “perfect storm” that makes the previous statement possible in a production environment.

Vulnerability assessments rely on attitude to risk at their core. If the attitude is classed as low for a high risk threat, then there needs to be a responsible person capable of enforcing an argument for that particular threat to be at the top of the remediation list. This is often the challenge - where board members will accept a level of risk because the remediation itself may impact a particular process, or interfere with a particular development cycle - mainly because they do not understand the implication of weakened security over desired functionality.

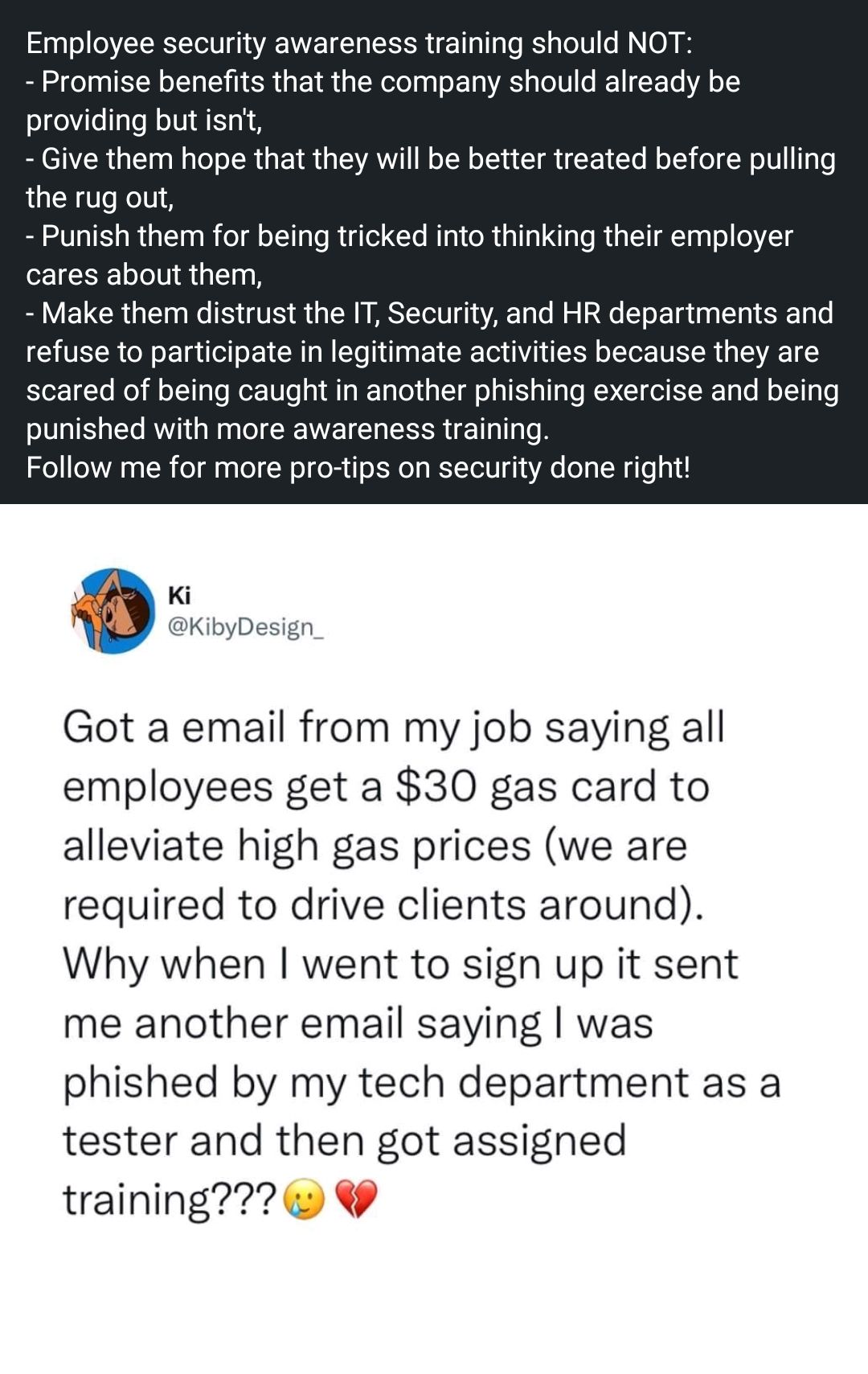

For any security program to be complete (as far as is possible), it also needs to consider the fundamental weakest link in any organisation - the user. Whilst this sounds harsh, the below statement is always true

“A malicious actor can send 1,000 emails to random users, but only needs one to actually click a link to gain a foothold into an organisation”

For this reason, any internal vulnerability assessment program should also factor in social engineering, phishing simulations, vishing, eavesdropping (water cooler / kitchen chat), unattended documents left on copiers, dropping a USB thumb drive in reception or “public” (in the sense of the firm) areas.

There’s a lot more to this topic than this article alone can sanely cover. After several years experience in Information and Infrastructure Security, I’ve seen my fair share of inadequate processes and programs, and it’s time we addressed the elephant in the room.

Got you thinking about your own security program ?

Hello! It looks like you're interested in this conversation, but you don't have an account yet.

Getting fed up of having to scroll through the same posts each visit? When you register for an account, you'll always come back to exactly where you were before, and choose to be notified of new replies (either via email, or push notification). You'll also be able to save bookmarks and upvote posts to show your appreciation to other community members.

With your input, this post could be even better 💗

Register Login