NODEBB: Nginx error performance & High CPU

-

I don’t understand everything you said.

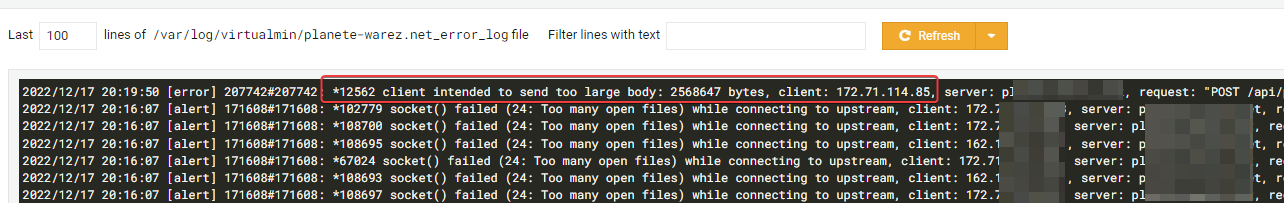

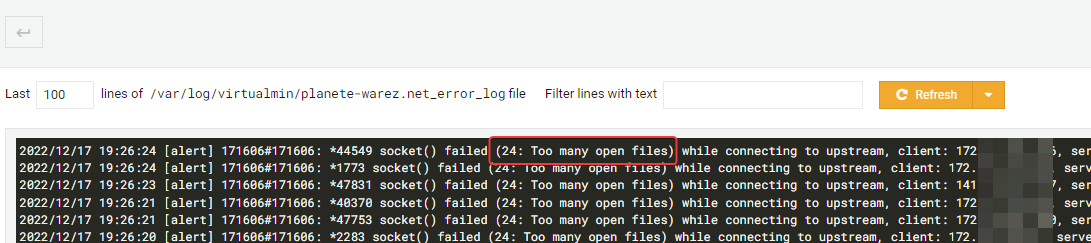

So, what can we do? We currently have 1.1k users online.

We have a lot of registrations.

@phenomlab 362 user registrations in two days, and many users who just read the forum.

-

@DownPW said in NODEBB: Nginx error performance & High CPU:

I don’t understand everything you said.

So, what can we do?

My point here is that the traffic, whilst legitimate in the sense that it comes from another site that has closed, could still be nefarious in nature, so you should keep your guard up. However, a number of signups can’t be wrong, particularly if they are actually posting content and not requesting URLs that do not exist on your site.

I see no indication of that, so the comfort level that this is legitimate traffic does increase somewhat, helped by the seemingly legitimate registrations. However, because all the source IP addresses are within the Cloudflare ranges, you have no real ability to tell who they are without performing the steps I outlined in the previous post.
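
For reference, one common way to recover the visitor address behind Cloudflare in nginx is the `ngx_http_realip_module`; this may or may not match the steps mentioned above. A minimal sketch, assuming the module is compiled in and that the trusted ranges are copied from Cloudflare's published list (only two of them are shown here):

```nginx
# Goes in the http block. Assumes ngx_http_realip_module is available.
# Trust the header only when the connection comes from Cloudflare.
set_real_ip_from 173.245.48.0/20;
set_real_ip_from 103.21.244.0/22;
# ... add the remaining IPv4 and IPv6 ranges from Cloudflare's published list ...

# Cloudflare passes the original visitor address in this request header.
real_ip_header CF-Connecting-IP;
```

With this in place, `$remote_addr` in the access log (and `$binary_remote_addr` in any rate limit zone) should reflect the visitor rather than the Cloudflare edge.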

The good news is that your site just got a huge increase in popularity, but with that will always be a need to keep a close eye on activity. It would only take one nefarious actor to potentially bring down your site.

The nginx configuration you’ve applied will indeed alleviate the stress placed on the server, but it is a double-edged sword in the sense that it makes the goalpost much wider for any potential attack.

My advice herein would be to not scale these settings too high. Use sane judgement.

For the NodeBB side, I know they have baked rate limiting into the product but I’m sure you can actually change that behaviour.

Have a look at `/admin/settings/advanced#traffic-management`. You’ll probably need to play with the values here to get a decent balance, but this is where I’d start.

-

I think you’re right, Mark, and that’s why I came here looking for your valuable advice and expertise.

Basically, the illegal site that closed was a movie download site. A topic was opened on our forum to talk about it, and many people came looking for answers on why and how.

You’re right that we can’t be sure of anything, and that there could be bot or DDoS attacks among all these connections.

I activated Under Attack mode on Cloudflare just now, as you advised, and we will see what happens, as you said.

As you advised, I also restored the default nginx configuration values and removed my nginx modifications specified above.

I would like to take advantage of your expertise and get a hand from you to properly configure nginx for DDoS and high traffic (which precise modifications to make, and with which precise values).

-

@DownPW ok, good. Let’s see what the challenge does to the site traffic. Those who are legitimate users won’t mind having to perform a one-time additional authentication step, but bots of course will simply stumble at this hurdle.

-

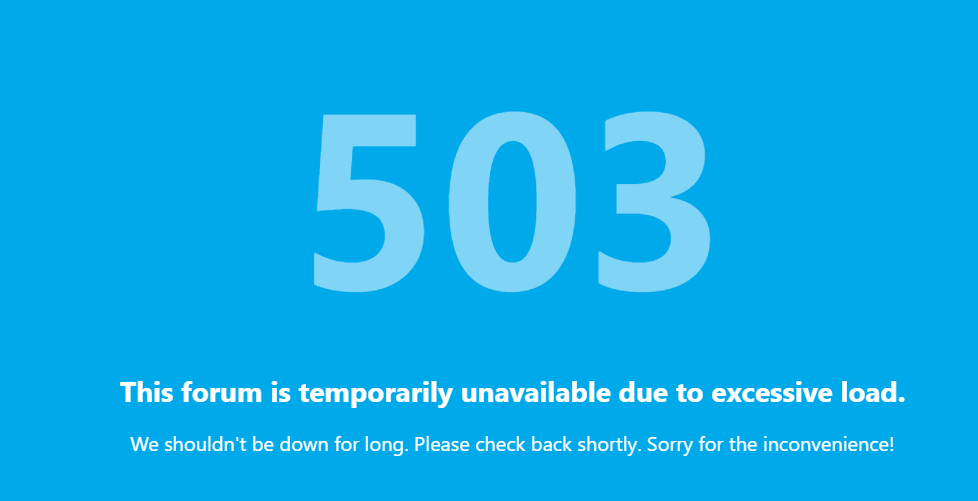

The number of users is better (408), but there are a lot of lost connections. Navigation is hard.

-

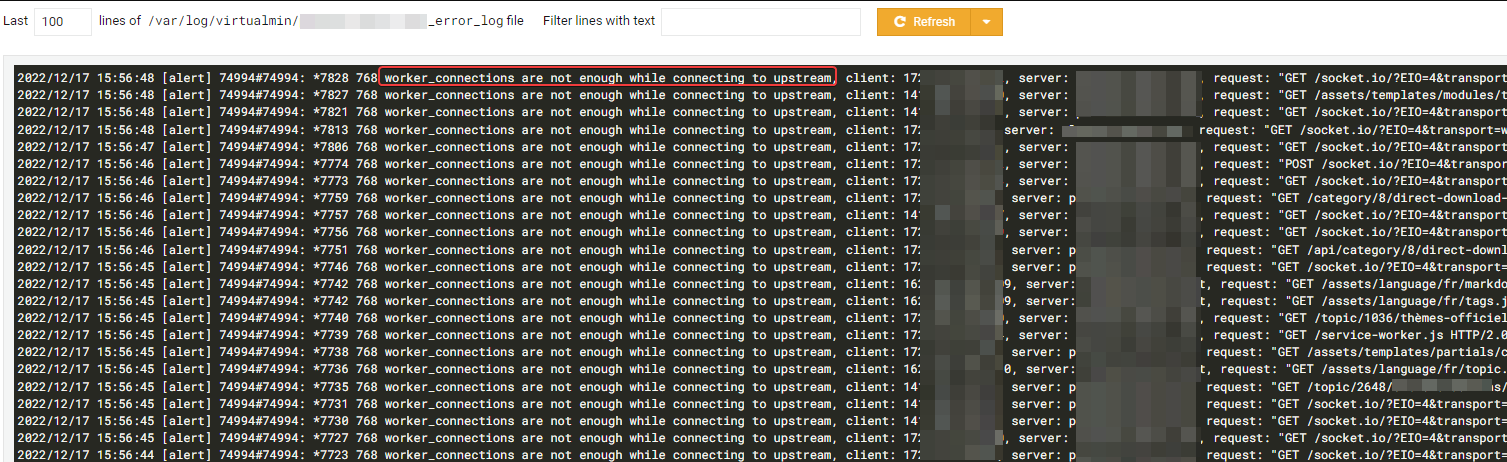

I have changed the nginx conf with:

    worker_rlimit_nofile 70000;

    events {
        worker_connections 65535;
        multi_accept on;
    }

CF is in Under Attack mode.

-

@DownPW I still have access to your Cloudflare tenant so will have a look shortly.

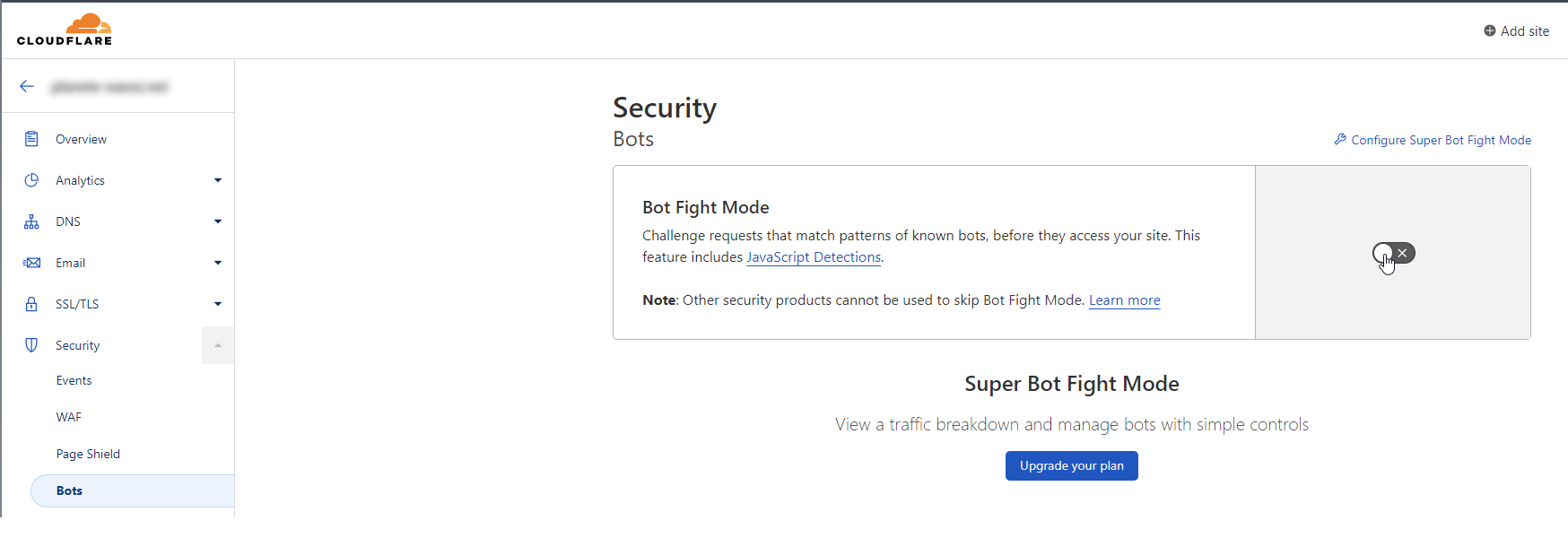

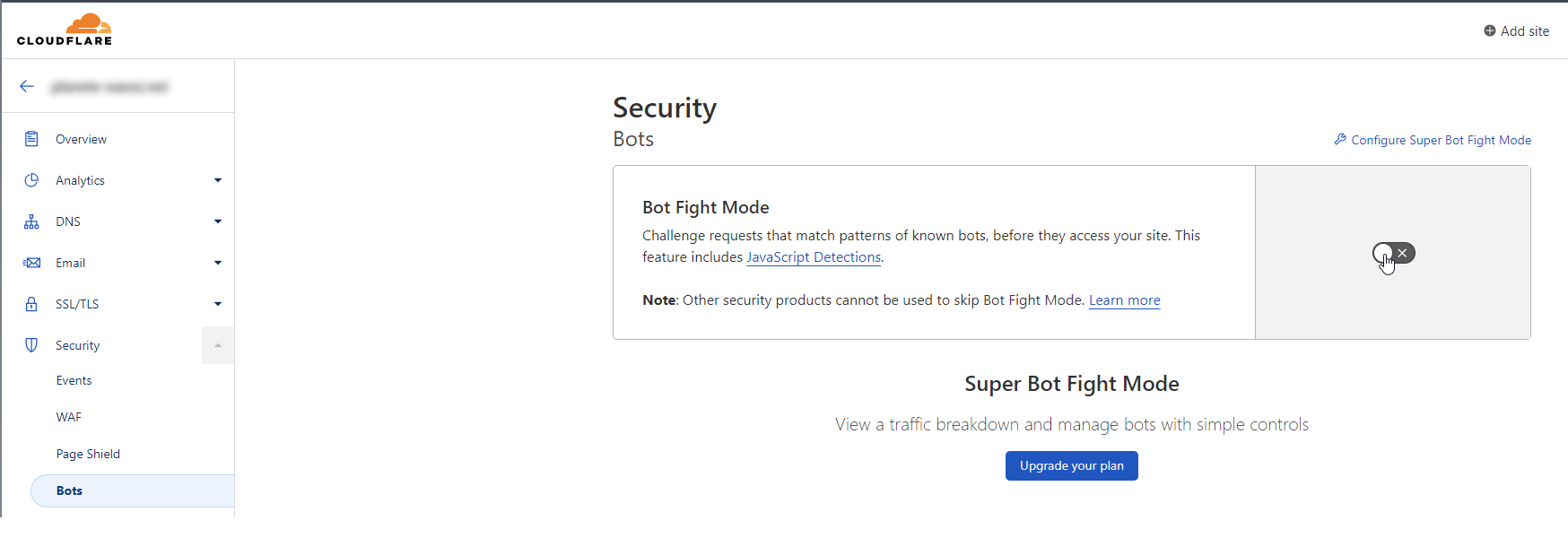

EDIT: I am in now - personally, I would also enable this (and configure it)

-

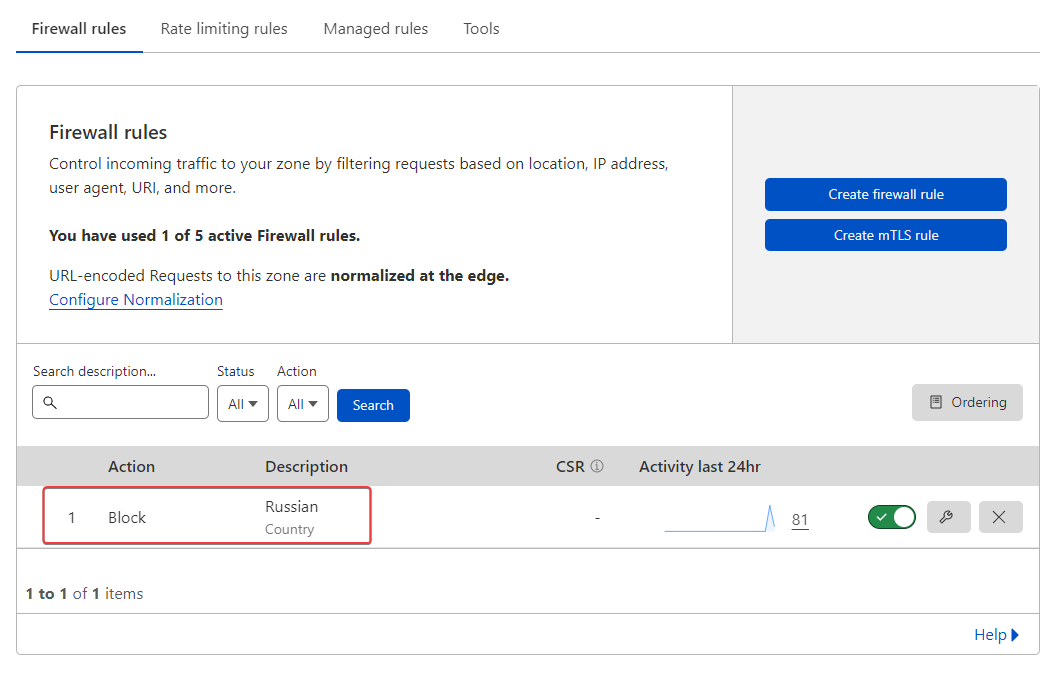

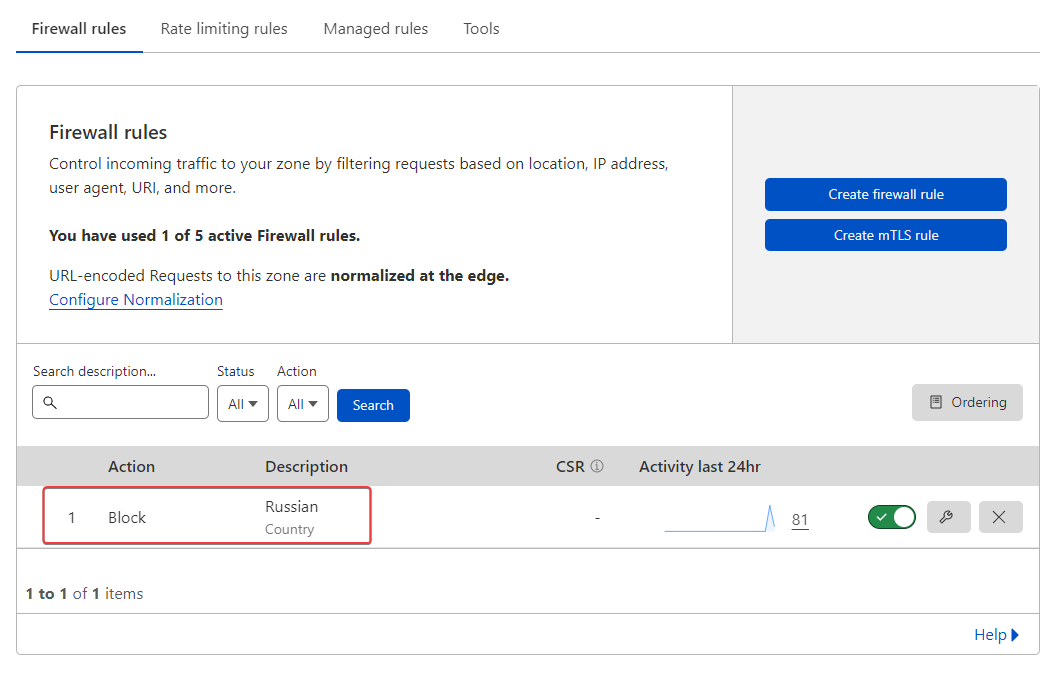

@phenomlab I have already activated it and added a WAF rule for Russia.

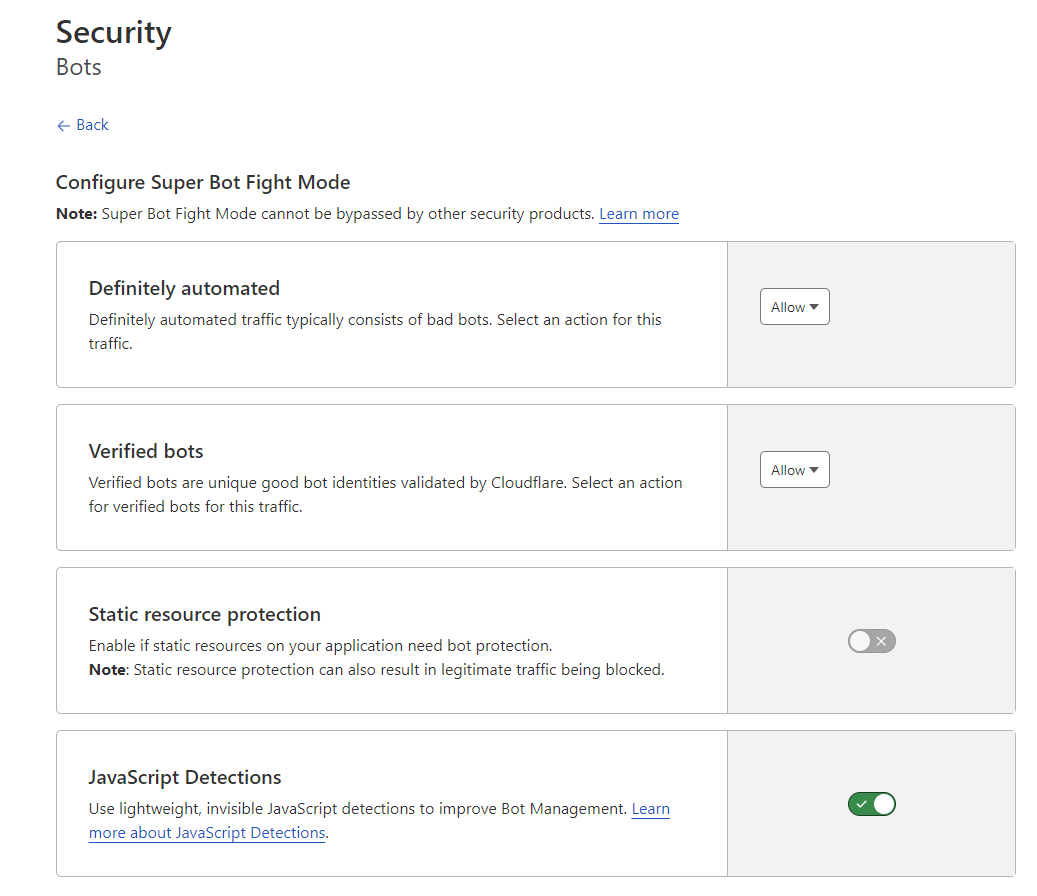

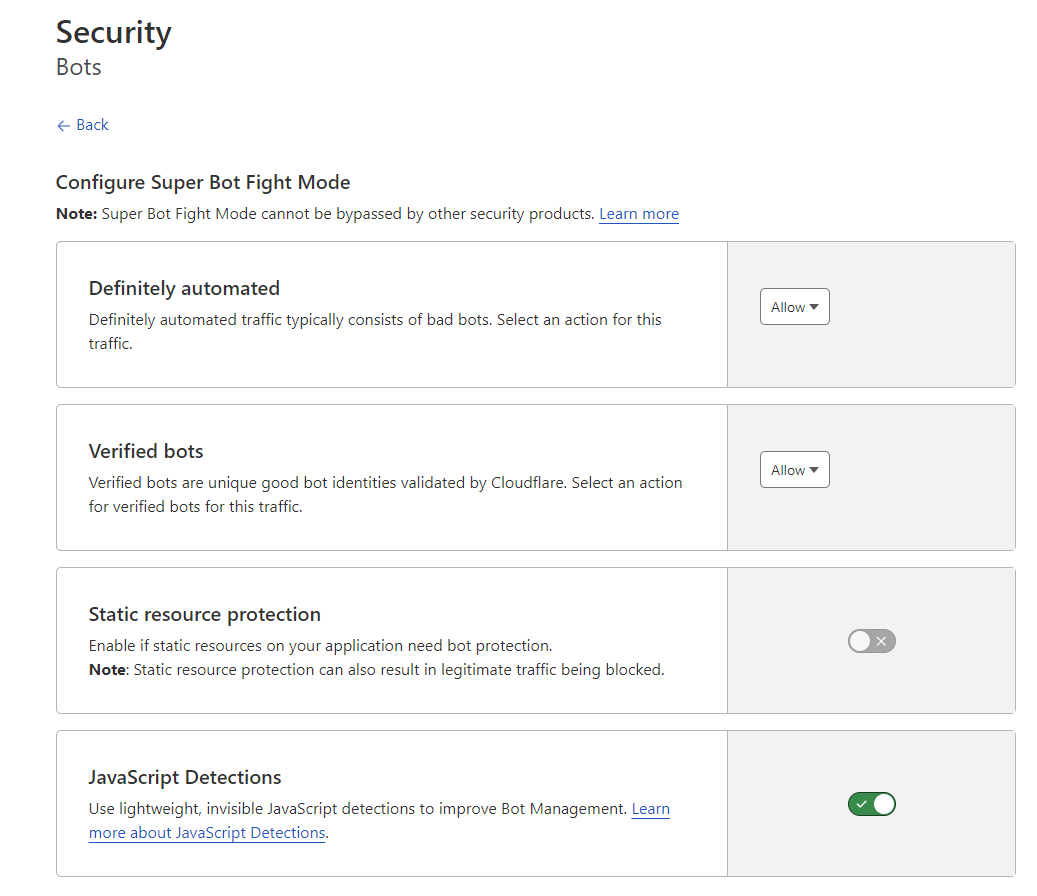

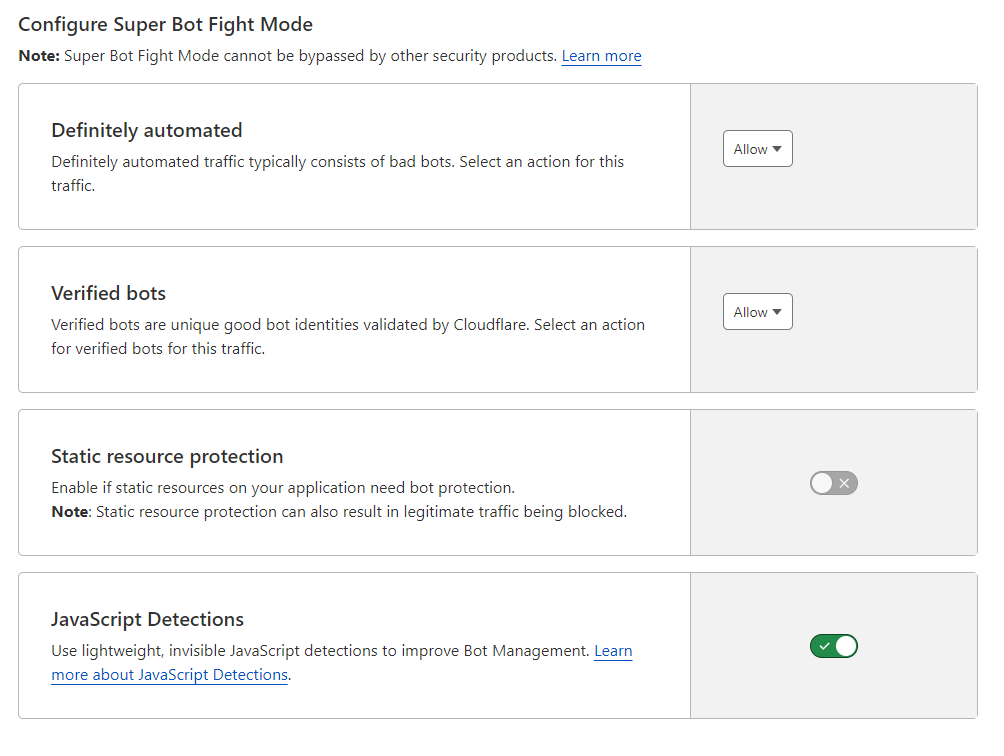

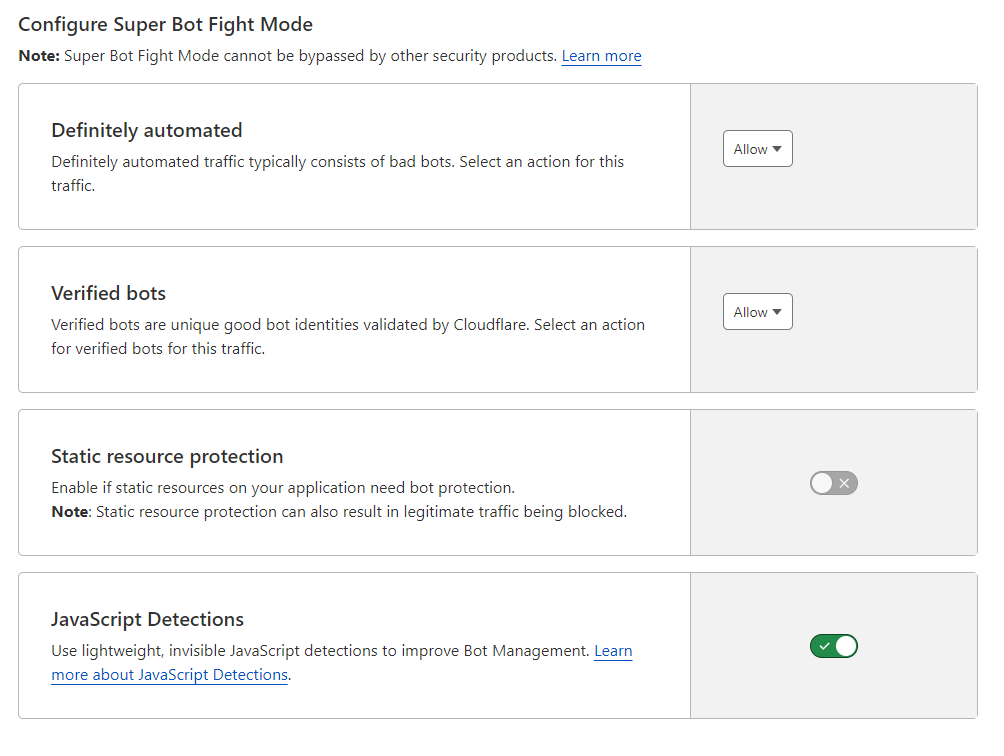

With these bot settings:

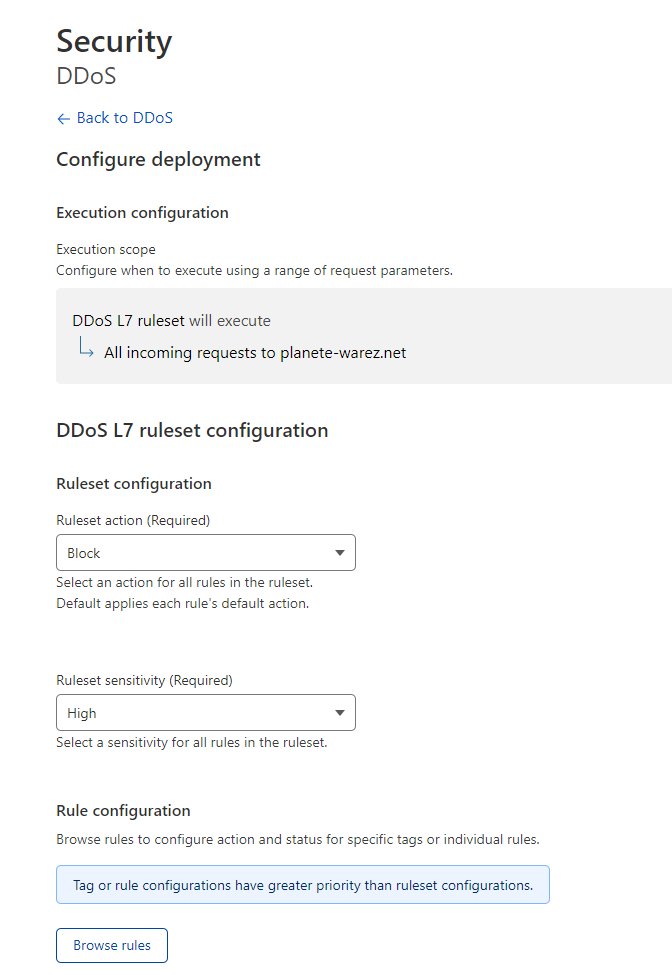

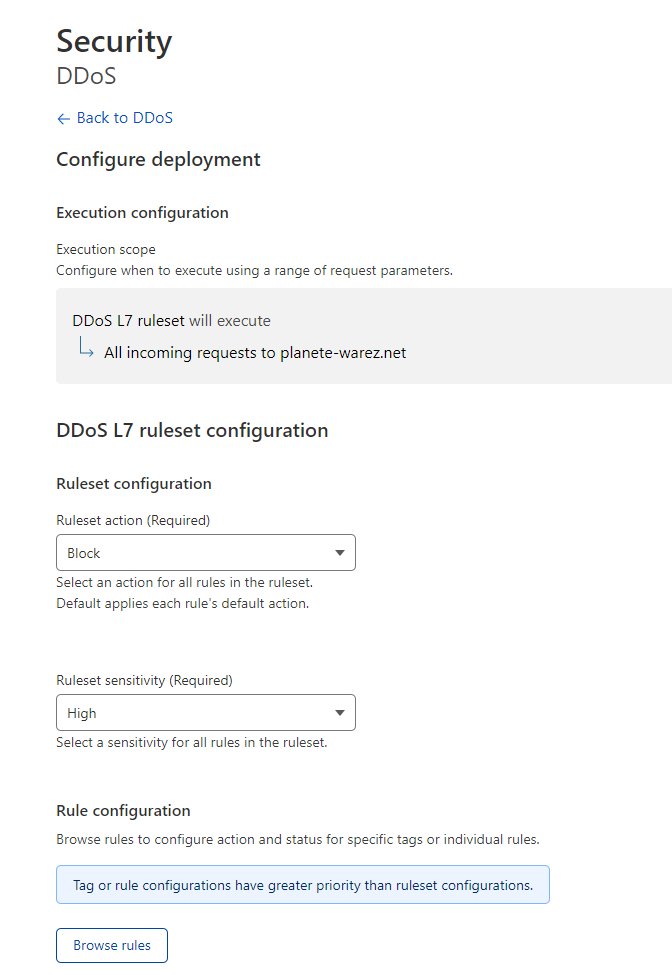

And these settings for DDoS protection:

-

@DownPW said in NODEBB: Nginx error performance & High CPU:

I have already activated it

Are you sure? When I checked your tenant it wasn’t active - that’s where I took the screenshot from.

-

Yep, I activated it afterwards.

-

For your information @phenomlab ,

- I have ban via iptables suspicious ip address find on /etc/nginx/accesss.log and virtualhost access.log like this :

iptables -I INPUT -s IPADDRESS -j DROP - Activate bot option on CF

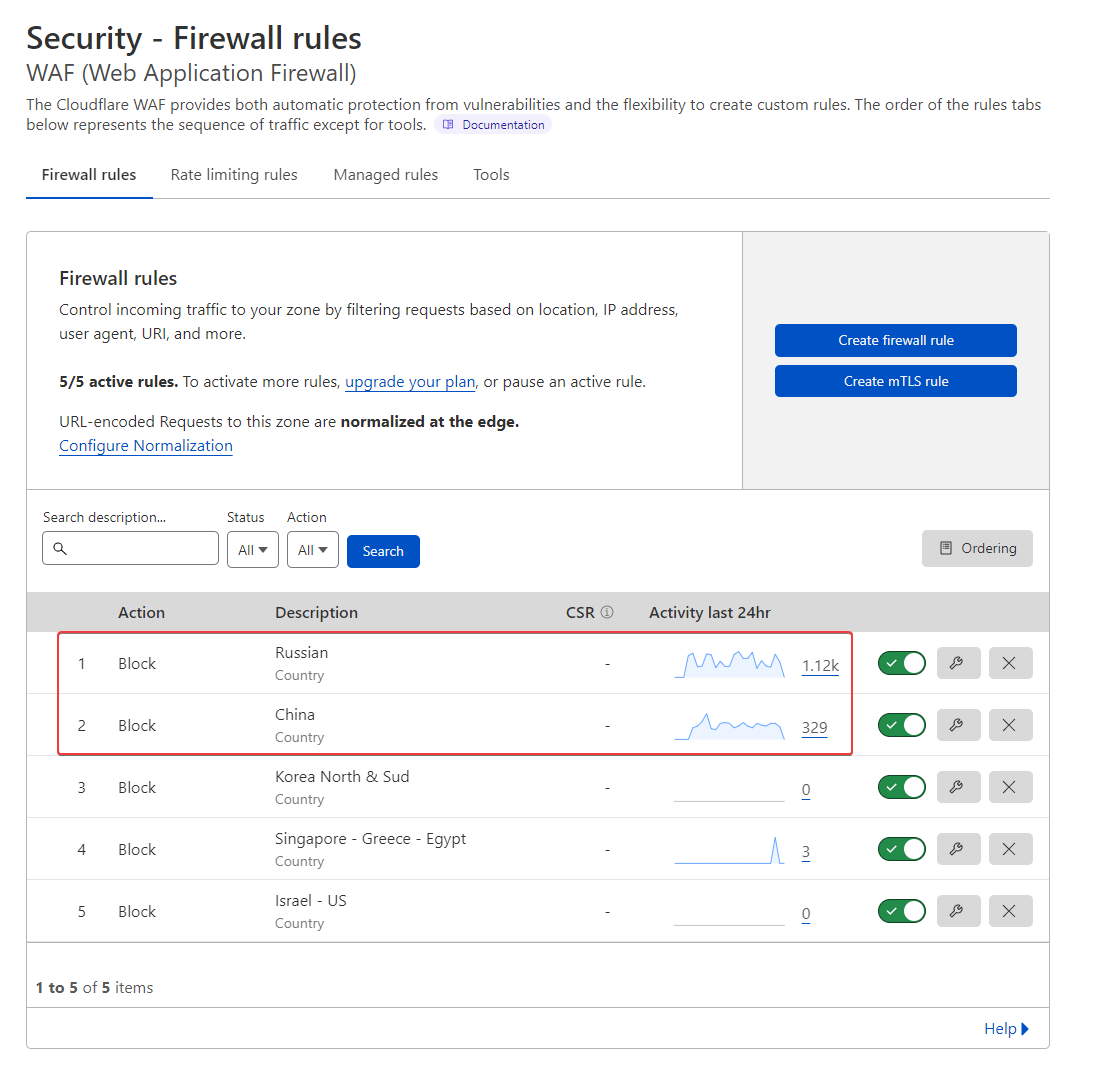

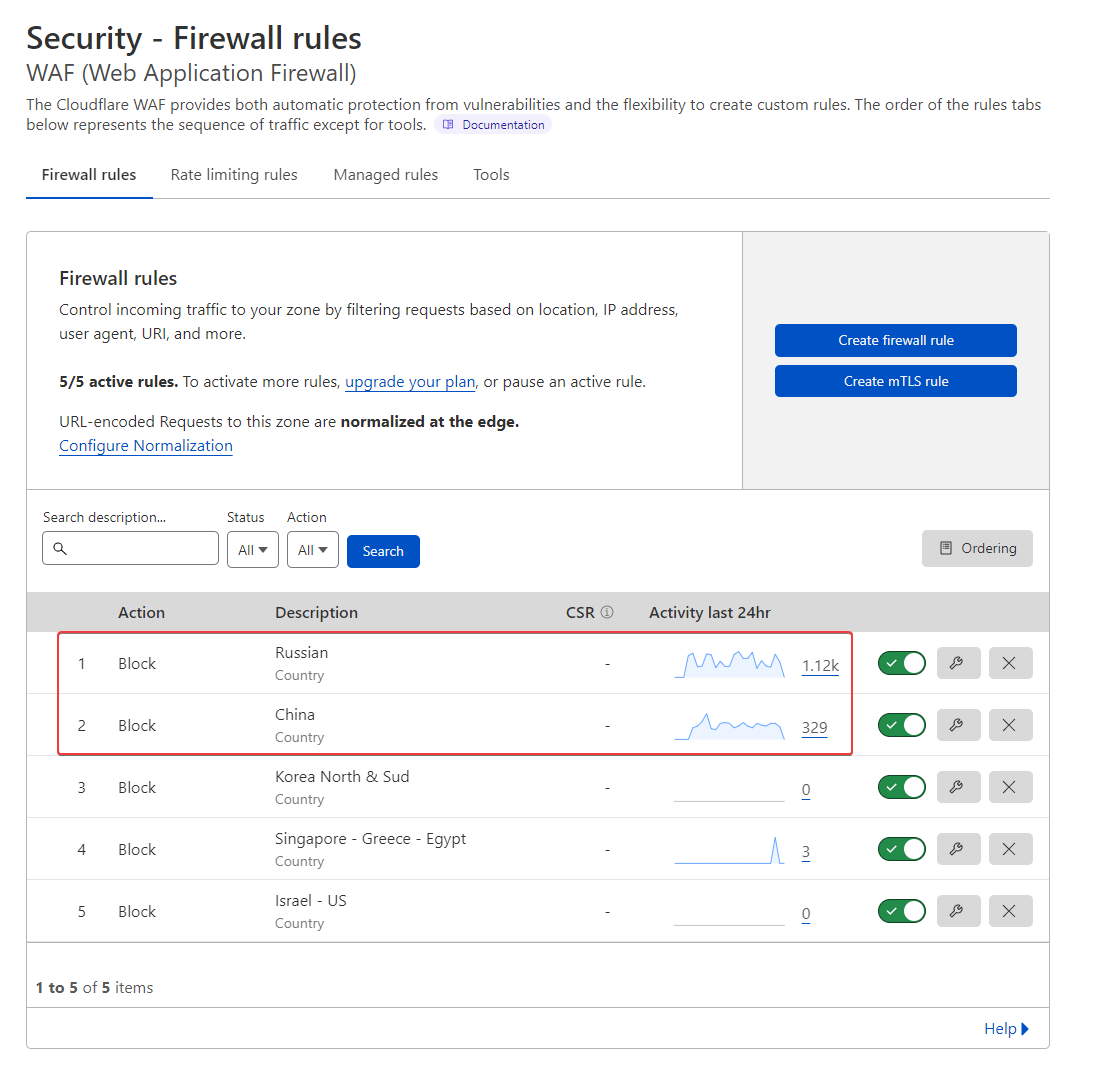

- Create contry rules (Russie and China) on CF WAF

- I left under attack mode option activated on CF

- I have just change nginx.conf like this for test (If you have best value, I take it ! ) :

worker_rlimit_nofile 70000; events { worker_connections 65535; multi_accept on; } http { ## # Basic Settings ## limit_req_zone $binary_remote_addr zone=flood:10m rate=100r/s; limit_req zone=flood burst=100 nodelay; limit_conn_zone $binary_remote_addr zone=ddos:10m; limit_conn ddos 100;100r/s iit’s already a lot !!

and for vhost file :

server { ..... location / { limit_req zone=flood; #Test limit_conn ddos 100; #Test }–> If you have other ideas, I’m interested

–> If you have better values to use in what I modified, please let me know. - I have ban via iptables suspicious ip address find on /etc/nginx/accesss.log and virtualhost access.log like this :

-

@DownPW my only preference would be to not set `worker_connections` so high.

-

Ok so what value do you advise?

-

@DownPW you should base it on the output of `ulimit` - see below. With that high a value, you run the risk of overwhelming your server.
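
A minimal sketch of sizing these directives from the open-file limit, assuming `ulimit -n`, run as the user nginx runs under, reports 4096 (a made-up figure for illustration; use whatever your server actually reports):

```nginx
# Illustrative values only, based on an assumed `ulimit -n` of 4096.
worker_processes auto;

# Open-file limit applied to each worker process.
worker_rlimit_nofile 4096;

events {
    # worker_connections counts connections to the proxied backend too,
    # and every connection needs a file descriptor, so stay well below
    # worker_rlimit_nofile to leave room for logs and static files.
    worker_connections 2048;
}
```

The idea is simply that `worker_connections` should be derived from what the system allows rather than set to the largest number nginx will accept.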

-

@DownPW And the `worker_processes` value? I expect this to be between 1 and 4?

-

`worker_processes auto;`

-

@DownPW ok. You should refer to that same article I previously provided. You can probably set this to a static value.

-

OK, I will look into a better `worker_processes` value.

I added a request rate limit and a `limit_conn_zone` in the http block and the vhost block:

– nginx.conf:

    http {
        # Maximum requests per IP
        limit_req_zone $binary_remote_addr zone=flood:10m rate=100r/s;
        # Maximum connections per IP
        limit_conn_zone $binary_remote_addr zone=ddos:1m;
        # ...
    }

– vhost.conf:

    location / {
        limit_req zone=flood burst=100 nodelay;
        limit_conn ddos 10;
        # ...
    }

–> I have tested other values for rate and burst, but they cause problems accessing the forum. If you have better ones, I’ll take them.
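
One possible reason lower values block real visitors: behind Cloudflare, `$binary_remote_addr` is the address of the Cloudflare edge unless the real client address is restored, so many visitors can share a single bucket. As a rough sketch only (the numbers are illustrative assumptions, not tested recommendations, and `delay=` assumes nginx 1.15.7 or later), a gentler policy could queue part of a burst instead of serving all of it at once:

```nginx
# nginx.conf, inside the http block (hypothetical values)
limit_req_zone $binary_remote_addr zone=flood:10m rate=20r/s;
limit_conn_zone $binary_remote_addr zone=ddos:1m;

# Answer rejected requests with 429 instead of the default 503.
limit_req_status 429;
limit_conn_status 429;

# vhost.conf, inside the server block
location / {
    # The first 20 excess requests pass immediately, the next 20 are
    # delayed to respect the rate, and anything beyond burst is rejected.
    limit_req zone=flood burst=40 delay=20;
    limit_conn ddos 10;
}
```

Rejected requests are written to the nginx error log, which gives a way to check whether a given rate is hitting real users before tightening it further.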

Today I added a `proxy_read_timeout` to vhost.conf (60 by default):

    proxy_read_timeout 180;

I have deactivated Under Attack mode on CF and changed the security level to High.

I have added other rules on the CF WAF:

-

@DownPW what settings do you have in advanced (in settings) for rate limit etc?
