OGProxy - a replacement for iFramely

-

Can’t wait

-

@DownPW Coming soon…

-

A release date, maybe?

-

@DownPW in the coming weeks.

-

@DownPW sorry for the delay here - real life is getting in the way, but the latest release will be pushed out soon.

-

no problem.

I was waiting for this new version before changing my server, but I think I’m going to install the old one. Keep up the good work, bro.

-

@DownPW sorry for the delay here - real life is getting in the way, but the latest release will be pushed out soon.


Is the new version still in development, @phenomlab?

I ask because personally, I think OG Proxy is really cool, but it uses a lot of RAM on the server, sometimes up to 2GB.

That’s quite a lot. Optimizing it in this area would be really great.

-

I have a Claude AI Pro account, and I asked it to optimize the code to address the memory consumption problem.

Here is its answer:

Root causes of the 2 GB RAM usage

1. Unbounded cache (memory-cache)

The original code stores every visited URL forever, with no cap and no reliable automatic expiration. On an active forum with thousands of unique links, this grows indefinitely.

Fix → lru-cache: maximum 500 entries (configurable via CACHE_MAX), automatic TTL expiration at 1 hour. Least-recently-used entries are evicted automatically.

2. Redundant fetches per request

For every uncached URL, the original performs: OGS scrape + axios (full page) + cheerio + meta-parser + axios (favicon). If multiple users click the same link simultaneously, all of these are duplicated.

Fix → In-flight request deduplication (inFlight Map): if 5 requests arrive for youtube.com/xxx at the same time, only one fetch is fired and the other 4 await the same promise.

3. Unbounded favicon buffering

The original downloads any favicon as an arraybuffer without checking its size first. A favicon URL pointing to a large image can load several MB into memory.

Fix → HEAD request first to check Content-Length, then skip if > 50 KB (FAVICON_MAX). The HTML response body is also explicitly freed (htmlRes.data = null) once parsed.

4. Axios with no timeout or size limit

Without a timeout, slow requests remain suspended in memory indefinitely. Without maxContentLength, a 50 MB page is fully loaded into RAM.

Fix → REQ_TIMEOUT=8000ms and MAX_BODY=2MB per outbound request.

5. No compression

Without gzip, large JSON responses consume more bandwidth and remain in transit longer.

Fix → compression middleware.
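To make the first fix more concrete, here is a minimal, dependency-free sketch of a cache that is bounded in both size and age. It is not how lru-cache is implemented; the `BoundedCache` class and its `max`, `ttlMs` and `now` parameters are hypothetical names chosen for illustration only.

```javascript
"use strict";

// Hypothetical illustration only: a tiny LRU cache with a per-entry TTL.
// A Map iterates in insertion order, so its first key is the least recently used.
class BoundedCache {
  constructor({ max, ttlMs, now = Date.now }) {
    this.max = max;       // maximum number of entries
    this.ttlMs = ttlMs;   // lifetime of each entry in milliseconds
    this.now = now;       // injectable clock (assumption, to ease testing)
    this.map = new Map(); // key → { value, expires }
  }

  get(key) {
    const entry = this.map.get(key);
    if (!entry) return undefined;
    if (entry.expires <= this.now()) {
      this.map.delete(key); // expired: drop it and report a miss
      return undefined;
    }
    // Re-insert so the key becomes the most recently used.
    this.map.delete(key);
    this.map.set(key, entry);
    return entry.value;
  }

  set(key, value) {
    this.map.delete(key);
    this.map.set(key, { value, expires: this.now() + this.ttlMs });
    if (this.map.size > this.max) {
      // Evict the least recently used entry (first key in the Map).
      const oldest = this.map.keys().next().value;
      this.map.delete(oldest);
    }
  }

  get size() {
    return this.map.size;
  }
}

const demo = new BoundedCache({ max: 2, ttlMs: 1000 });
demo.set("a", 1);
demo.set("b", 2);
demo.get("a");    // "a" is now the most recently used key
demo.set("c", 3); // over the cap, so "b" is evicted
console.log(demo.size, demo.get("b"), demo.get("a")); // 2 undefined 1
```

However many unique URLs pass through, the number of stored entries never exceeds `max`, which is the property the unbounded cache lacks.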

Near-certain gains:

- The bounded LRU cache will prevent unbounded memory growth. This is most likely the main cause of the 2 GB if your forum has a large number of unique links.

- Timeouts will prevent stalled requests from sitting in memory indefinitely.

- Page and favicon size limits will eliminate pathological edge cases.

Traffic-dependent gains:

- In-flight request deduplication only helps if multiple users click the same link simultaneously — very useful on a busy forum, negligible on a small one.
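The deduplication idea can be tried in isolation. The sketch below is an assumption-laden illustration rather than the proxy's actual code: `coalesce`, `slowFetch` and the 20 ms delay are hypothetical stand-ins, and no network request is made.

```javascript
"use strict";

// Hypothetical helper: concurrent calls for the same key share one promise.
function coalesce(fetcher) {
  const inFlight = new Map(); // key → Promise
  return function (key) {
    if (!inFlight.has(key)) {
      const promise = Promise.resolve()
        .then(() => fetcher(key))
        .finally(() => inFlight.delete(key)); // forget the key once settled
      inFlight.set(key, promise);
    }
    return inFlight.get(key);
  };
}

// Stand-in for the real scrape; counts how often it actually runs.
let calls = 0;
async function slowFetch(url) {
  calls += 1;
  await new Promise((resolve) => setTimeout(resolve, 20));
  return { url, title: "example" };
}

const fetchOnce = coalesce(slowFetch);

async function main() {
  // Five "users" request the same link at the same moment.
  const results = await Promise.all(
    Array.from({ length: 5 }, () => fetchOnce("https://youtube.com/xxx"))
  );
  return { calls, sameObject: results.every((r) => r === results[0]) };
}

const done = main();
done.then((summary) => console.log(summary)); // { calls: 1, sameObject: true }
```

All five callers receive the same result object from a single run of `slowFetch`. Requests that arrive after the first one has settled start a new fetch, which is why a result cache is still needed alongside this.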

What may not change:

- If the 2 GB comes from something else (a leak inside open-graph-scraper itself, or Node.js not returning memory to the OS after objects are freed), this won’t fix everything. Node.js tends to hold onto allocated memory even after objects are released; this is normal V8 behaviour.

In that case, a simple complementary solution is to add an automatic daily restart to the systemd service:

    [Service]
    Restart=always
    # restart after 24h max
    RuntimeMaxSec=86400

This doesn’t fix the root cause but prevents accumulation over several days.
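To find out which of these cases applies, it may help to log the process's memory figures over time. This is a minimal sketch using Node's built-in `process.memoryUsage()`; the `toMB` and `memorySnapshot` helpers and the five-minute interval are hypothetical choices, not part of OGProxy.

```javascript
"use strict";

// Convert bytes to megabytes, rounded to one decimal place.
function toMB(bytes) {
  return Math.round((bytes / 1024 / 1024) * 10) / 10;
}

// Hypothetical helper: summarise process.memoryUsage() in MB.
function memorySnapshot(usage = process.memoryUsage()) {
  return {
    rssMB: toMB(usage.rss),             // total resident memory of the process
    heapUsedMB: toMB(usage.heapUsed),   // live JavaScript objects
    heapTotalMB: toMB(usage.heapTotal), // heap reserved by V8
    externalMB: toMB(usage.external),   // memory held outside the JS heap, e.g. Buffers
  };
}

console.log(memorySnapshot());

// In a long-running service, a periodic log line could look like this:
// setInterval(() => console.log(memorySnapshot()), 5 * 60 * 1000).unref();
```

As a rough guide, a steadily climbing `heapUsedMB` suggests that objects are being retained (a cache or a leak), whereas a high `rssMB` with a stable `heapUsedMB` points more towards buffers or memory that has simply not been handed back to the OS.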

The JS code (server.js):

    "use strict";

    /**
     * OGProxy - Optimized server
     * Improvements over original:
     *  - LRU cache (bounded) instead of unbounded memory-cache
     *  - Request deduplication (coalescing) to avoid redundant fetches
     *  - Compression middleware
     *  - Rate limiting
     *  - Helmet security headers
     *  - Axios timeout + max response size
     *  - Favicon size cap + skip if too large
     *  - Graceful shutdown
     *  - Winston structured logging
     *  - Joi input validation
     *  - .env support
     *  - Health check endpoint
     */

    require("dotenv").config();

    const express     = require("express");
    const cors        = require("cors");
    const helmet      = require("helmet");
    const compression = require("compression");
    const rateLimit   = require("express-rate-limit");
    const morgan      = require("morgan");
    const winston     = require("winston");
    const { LRUCache } = require("lru-cache");
    const Joi         = require("joi");
    const axios       = require("axios");
    const ogs         = require("open-graph-scraper");
    const cheerio     = require("cheerio");
    const metaParser  = require("meta-parser");
    const { URL }     = require("url");
    const path        = require("path");

    // ─── Config ───────────────────────────────────────────────
    const PORT        = parseInt(process.env.PORT        || "2000", 10);
    const API_KEY     = process.env.API_KEY || "YOUR_API_KEY_HERE";
    const ORIGIN      = process.env.ORIGIN  || "https://your-forum.example.com";
    const CACHE_MAX   = parseInt(process.env.CACHE_MAX   || "500", 10);          // max entries
    const CACHE_TTL   = parseInt(process.env.CACHE_TTL   || "3600", 10) * 1000;  // ms (default 1h)
    const REQ_TIMEOUT = parseInt(process.env.REQ_TIMEOUT || "8000", 10);         // ms per outbound request
    const MAX_BODY    = parseInt(process.env.MAX_BODY    || "2", 10) * 1024 * 1024; // MB → bytes
    const FAVICON_MAX = parseInt(process.env.FAVICON_MAX || "51200", 10);        // bytes (50 KB)
    const RATE_WINDOW = parseInt(process.env.RATE_WINDOW || "60", 10) * 1000;    // ms
    const RATE_LIMIT  = parseInt(process.env.RATE_LIMIT  || "30", 10);           // req per window

    // ─── Logger ───────────────────────────────────────────────
    const logger = winston.createLogger({
      level: process.env.LOG_LEVEL || "info",
      format: winston.format.combine(
        winston.format.timestamp(),
        winston.format.errors({ stack: true }),
        winston.format.json()
      ),
      transports: [
        new winston.transports.Console({ format: winston.format.simple() }),
        new winston.transports.File({ filename: "error.log", level: "error" }),
        new winston.transports.File({ filename: "access.log" }),
      ],
    });

    // ─── LRU Cache (bounded) ──────────────────────────────────
    const cache = new LRUCache({
      max: CACHE_MAX, // max number of items
      ttl: CACHE_TTL, // auto-expire entries
      updateAgeOnGet: false,
    });

    // ─── In-flight request deduplication ──────────────────────
    // If two requests arrive for the same URL before the first completes,
    // the second one waits for the first promise instead of spawning a new fetch.
    const inFlight = new Map(); // url → Promise

    // ─── Axios instance (shared, keep-alive, bounded) ─────────
    const http  = require("http");
    const https = require("https");

    const axiosInstance = axios.create({
      timeout: REQ_TIMEOUT,
      maxContentLength: MAX_BODY,
      maxBodyLength: MAX_BODY,
      headers: {
        "User-Agent":
          "Mozilla/5.0 (compatible; OGProxy/2.0; +https://github.com/phenomlab/ogproxy)",
        "Accept-Language": "en-US,en;q=0.9",
      },
      httpAgent:  new http.Agent({ keepAlive: true, maxSockets: 10 }),
      httpsAgent: new https.Agent({ keepAlive: true, maxSockets: 10 }),
    });

    // ─── Input validation ─────────────────────────────────────
    const urlSchema = Joi.object({
      url: Joi.string().uri({ scheme: ["http", "https"] }).required(),
    });

    // ─── App setup ────────────────────────────────────────────
    const app = express();

    // Security headers
    app.use(helmet({ contentSecurityPolicy: false }));

    // CORS
    app.use(cors({ origin: ORIGIN }));

    // Gzip compression
    app.use(compression());

    // HTTP request logging (stream to winston)
    app.use(morgan("combined", {
      stream: { write: (msg) => logger.info(msg.trim()) },
    }));

    // Rate limiting per IP
    app.use(
      "/ogproxy",
      rateLimit({
        windowMs: RATE_WINDOW,
        max: RATE_LIMIT,
        standardHeaders: true,
        legacyHeaders: false,
        message: { error: "Too many requests, please try again later." },
      })
    );

    // Static images directory
    app.use("/images", express.static(path.join(__dirname, "images")));

    // ─── Health check ─────────────────────────────────────────
    app.get("/health", (_req, res) => {
      res.json({
        status: "ok",
        uptime: process.uptime(),
        cacheSize: cache.size,
        inFlight: inFlight.size,
        memory: process.memoryUsage(),
      });
    });

    // ─── API key middleware ───────────────────────────────────
    function requireApiKey(req, res, next) {
      const key = req.headers["x-api-key"];
      if (!key || key !== API_KEY) {
        return res.status(401).json({ error: "Unauthorized" });
      }
      next();
    }

    // ─── Core fetch logic ─────────────────────────────────────
    async function fetchOGData(targetUrl) {
      // 1. OGS scrape
      const { result: ogsResult, error: ogsError } = await ogs({ url: targetUrl });
      if (ogsError) throw new Error(`OGS failed: ${ogsError}`);

      // 2. Fetch HTML (with size cap)
      let metadata = {};
      let faviconB64 = null;

      try {
        const htmlRes = await axiosInstance.get(targetUrl, {
          responseType: "text",
          decompress: true,
        });

        const $ = cheerio.load(htmlRes.data);

        // meta-parser on the raw HTML
        try {
          const parsed = metaParser(htmlRes.data);
          metadata = parsed || {};
        } catch (_) { /* non-fatal */ }

        // 3. Favicon – only fetch if small enough
        let faviconHref =
          $('link[rel="icon"]').attr("href") ||
          $('link[rel="shortcut icon"]').attr("href");

        if (faviconHref) {
          try {
            const base = new URL(targetUrl);
            const faviconUrl = new URL(faviconHref, base).href;

            // HEAD first to check Content-Length before downloading
            const headRes = await axiosInstance.head(faviconUrl).catch(() => null);
            const contentLength = headRes
              ? parseInt(headRes.headers["content-length"] || "0", 10)
              : 0;

            if (contentLength === 0 || contentLength <= FAVICON_MAX) {
              const iconRes = await axiosInstance.get(faviconUrl, {
                responseType: "arraybuffer",
                maxContentLength: FAVICON_MAX,
              });
              const mime = iconRes.headers["content-type"] || "image/x-icon";
              faviconB64 =
                `data:${mime};base64,` +
                Buffer.from(iconRes.data).toString("base64");
            } else {
              logger.info(`Favicon too large (${contentLength}B), skipping: ${faviconUrl}`);
            }
          } catch (err) {
            logger.warn("Favicon fetch failed", { url: targetUrl, err: err.message });
          }
        }

        // Free the HTML string early
        htmlRes.data = null;
      } catch (err) {
        logger.warn("HTML fetch failed (using OGS only)", {
          url: targetUrl,
          err: err.message,
        });
      }

      return {
        ...ogsResult,
        metaProperties: metadata,
        faviconUrl: faviconB64,
      };
    }

    // ─── /ogproxy route ───────────────────────────────────────
    app.get("/ogproxy", requireApiKey, async (req, res, next) => {
      try {
        // Validate input
        let { url: targetUrl } = req.query;
        const { error } = urlSchema.validate({ url: targetUrl });
        if (error) {
          return res
            .status(400)
            .json({ error: `Invalid URL: ${error.details[0].message}` });
        }

        // Normalise via the URL parser (lower-cases the host, adds "/" to a bare origin, etc.)
        targetUrl = new URL(targetUrl).href;

        // Cache hit?
        const cached = cache.get(targetUrl);
        if (cached) {
          res.setHeader("X-Cache", "HIT");
          return res.json(cached);
        }
        res.setHeader("X-Cache", "MISS");

        // Deduplicate concurrent requests for the same URL
        if (!inFlight.has(targetUrl)) {
          const promise = fetchOGData(targetUrl)
            .then((data) => {
              cache.set(targetUrl, data);
              return data;
            })
            .finally(() => inFlight.delete(targetUrl));
          inFlight.set(targetUrl, promise);
        }

        const data = await inFlight.get(targetUrl);
        return res.json(data);
      } catch (err) {
        next(err);
      }
    });

    // ─── Centralised error handler ────────────────────────────
    // eslint-disable-next-line no-unused-vars
    app.use((err, _req, res, _next) => {
      logger.error("Unhandled error", { message: err.message, stack: err.stack });
      res.status(500).json({ error: "Internal server error" });
    });

    // ─── Start ────────────────────────────────────────────────
    const server = app.listen(PORT, () => {
      logger.info(`OGProxy listening on port ${PORT}`);
      logger.info(`Cache: max=${CACHE_MAX} entries, TTL=${CACHE_TTL / 1000}s`);
    });

    // ─── Graceful shutdown ────────────────────────────────────
    function shutdown(signal) {
      logger.info(`${signal} received – shutting down gracefully`);
      server.close(() => {
        logger.info("HTTP server closed");
        process.exit(0);
      });
      // Force exit after 10 s if still busy
      setTimeout(() => {
        logger.warn("Forcing exit after timeout");
        process.exit(1);
      }, 10_000).unref();
    }

    process.on("SIGTERM", () => shutdown("SIGTERM"));
    process.on("SIGINT", () => shutdown("SIGINT"));
    process.on("uncaughtException", (err) => {
      logger.error("Uncaught exception", { err });
      shutdown("uncaughtException");
    });
    process.on("unhandledRejection", (reason) => {
      logger.error("Unhandled rejection", { reason });
    });

What do you think of this code?

-

@DownPW yes, I’ve noticed similar and it is to do with the cache because it is stored in memory and not on disk…

However, when I wrote the code, I did allow for a maximum cache age so I’m puzzled as to why this isn’t being honoured.

A review of the code is long overdue to be honest, but it’s slipped down the priority list. The fastest way to resolve this in the short term is to perform a daily restart of the service which will flush the cache.

-

Don’t hesitate to tell me what you think of this modified code.

Until next time.

-

@DownPW Looks ok for the most part, but this looks like it’s truncated?

    return {
      ...ogsResult,
      metaProperties: metadata,
      faviconUrl: faviconB64,
    };
    }

-

@DownPW Looks ok for the most part, but this looks like it’s truncated?

Exactly. I need to look at the code again; I’ll get back to you soon.

-

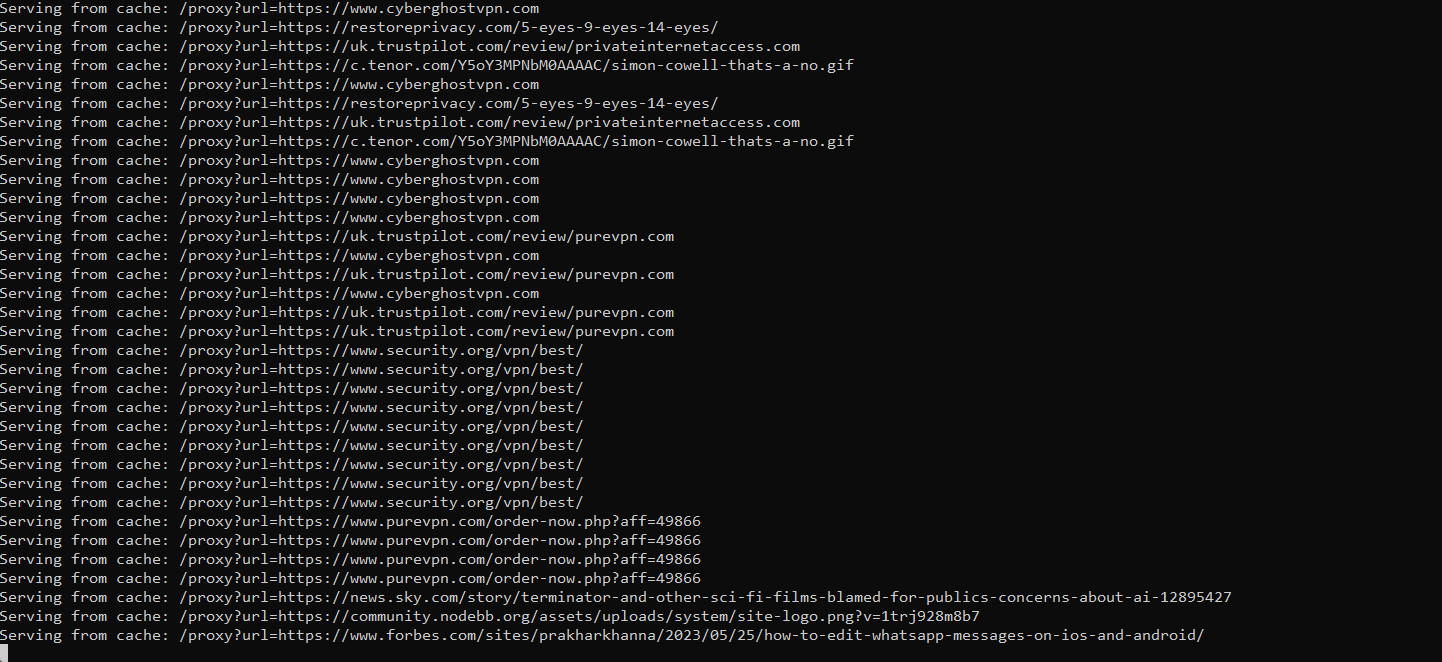

I was experiencing 500 (Internal Server Error) responses from the proxy, visible in the browser console:

    GET https://proxy.xxx-xxx.net/ogproxy?url=https%3A%2F%2Fzupimages.net%2Fup%2F26%2F16%2Fld8h.jpg 500 (Internal Server Error)

After investigation, I found two root causes:

1. Direct image URLs being sent to the proxy

The custom JavaScript responsible for detecting links and sending them to the proxy was using the following regex to exclude direct image links:

    var fileExtensionPattern = /\.(png|jpeg|gif|pdf|docx?|xlsx?|pptx?|zip|rar|svg)$/i;

Note that .jpg and .webp were missing from the pattern. As a result, links ending in .jpg were not recognized as direct image URLs and were forwarded to OGProxy, which then tried to scrape them as web pages using open-graph-scraper, causing a 500 error.

The fix was to add the missing extensions:

    var fileExtensionPattern = /\.(jpg|png|jpeg|gif|pdf|docx?|xlsx?|pptx?|zip|rar|svg|webp)$/i;

2. The proxy not following HTTP redirects

Some image hosting services (e.g. zupimages.net) return a 301 redirect from the bare domain to www. When curl is told to follow the redirect (-L), the image loads fine:

    curl -IL https://zupimages.net/up/26/16/ld8h.jpg
    HTTP/2 301 → https://www.zupimages.net/up/26/16/ld8h.jpg
    HTTP/2 200

However, the proxy’s axios.get() call does not handle this gracefully when open-graph-scraper is involved, resulting in a 500 error being returned to the client.
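One possible way to guard against both problems at once is to ask the target for its headers first, following redirects, and only scrape when the final response is an HTML page. The following is a sketch under assumptions, not a confirmed fix: it relies on the global `fetch` available in Node.js 18+, `looksLikeHtml` is a hypothetical helper, and the demo uses a stubbed fetch so that no network call is made. Some servers reject HEAD requests, so a real implementation would need a fallback.

```javascript
"use strict";

// Hypothetical pre-check: follow redirects with a HEAD request and report
// whether the final response looks like an HTML page worth scraping.
// Assumes Node.js 18+ (global fetch); fetchImpl is injectable for testing.
async function looksLikeHtml(targetUrl, fetchImpl = fetch) {
  const res = await fetchImpl(targetUrl, {
    method: "HEAD",
    redirect: "follow",                // e.g. zupimages.net → www.zupimages.net
    signal: AbortSignal.timeout(8000), // do not wait forever on slow hosts
  });
  const type = (res.headers.get("content-type") || "").toLowerCase();
  return {
    finalUrl: res.url || targetUrl,    // URL after any redirects
    isHtml:
      res.ok &&
      (type.startsWith("text/html") || type.startsWith("application/xhtml+xml")),
  };
}

// Demo with a stubbed fetch (no network): the final response is an image.
const stubFetch = async () => ({
  ok: true,
  url: "https://www.zupimages.net/up/26/16/ld8h.jpg",
  headers: new Headers({ "content-type": "image/jpeg" }),
});

const check = looksLikeHtml("https://zupimages.net/up/26/16/ld8h.jpg", stubFetch);
check.then((result) => console.log(result));
```

With a check like this in front of the scrape, the proxy could answer with a 4xx status or a minimal payload for non-HTML targets instead of a 500.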

My questions are:

- Is there a known best practice for handling redirect chains in open-graph-scraper?

- Would passing maxRedirects or followRedirect options explicitly to axios or ogs fix this reliably?

- Is there a cleaner way to pre-filter direct image/file URLs before they reach the proxy, ideally at the NodeBB plugin level rather than in custom JS?
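On the pre-filtering question, one weakness of matching the regex against the whole link is that a query string or fragment after the extension (for example `photo.webp?size=large`) defeats the `$` anchor. A minimal sketch that tests only the path component is shown below; `isDirectFileUrl` is a hypothetical helper name, and the extension list is the corrected one from this post.

```javascript
"use strict";

// Extensions treated as direct files rather than pages (the corrected pattern above).
const FILE_EXTENSION_PATTERN =
  /\.(jpg|png|jpeg|gif|pdf|docx?|xlsx?|pptx?|zip|rar|svg|webp)$/i;

// Hypothetical helper: test only the URL path, so "?query" and "#hash"
// parts cannot hide the file extension from the pattern.
function isDirectFileUrl(href) {
  try {
    return FILE_EXTENSION_PATTERN.test(new URL(href).pathname);
  } catch (_) {
    return false; // not an absolute URL, so it cannot be classified here
  }
}

console.log(isDirectFileUrl("https://zupimages.net/up/26/16/ld8h.jpg"));    // true
console.log(isDirectFileUrl("https://example.com/photo.webp?size=large")); // true
console.log(isDirectFileUrl("https://example.com/article"));               // false
```

The same function could run in the browser-side custom JS or inside the proxy itself, since `URL` is available in both environments.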

Thanks in advance.
